Public Artifact Leaks: How Attackers Use JavaScript, Error Pages, and Exposed Files to Find Exposure Paths

Fusionstek Research

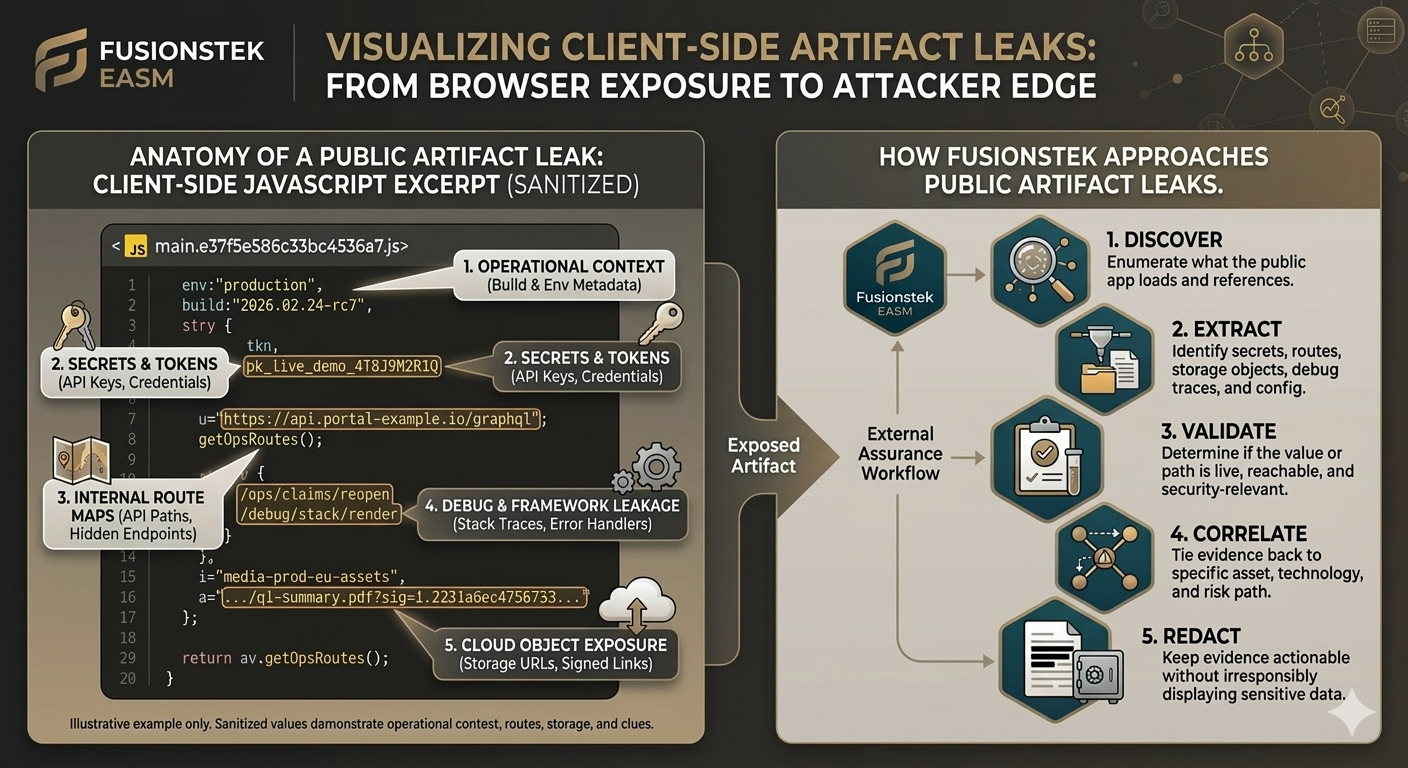

External Exposure Flow

How a Public Artifact Becomes an Exposure Path

Attackers do not stop at the homepage. They enumerate what the browser loads, what the application reveals when it breaks, and what public files expose about workflow clues, secrets, and control weaknesses.

Public App

Start with the domain, load the app, and capture every JavaScript bundle, source map, public asset, and third-party request the browser reveals.

Artifact Leak

Extract tokens, workflow clues, cloud object URLs, debug traces, config values, or service hints from those public artifacts.

Validation

Validate whether the exposed value, signed URL, or sensitive workflow reference is reachable and whether it creates a meaningful control gap.

Exposure Path

Tie the evidence back to the affected asset so teams can prioritize attacker-relevant exposure paths, not just noisy static matches.

Public artifact leaks are one of the most consistently underestimated external attack surface problems. The issue is not just a token in a JavaScript bundle. It is the broader class of browser-accessible files, error pages, build outputs, and debug traces that tell an attacker how your application works before they ever touch a login page.

Attackers do not need internal access to benefit from this. They start with what your own application serves to the public internet. That includes JavaScript bundles, source maps, exposed configuration files, framework error pages, public storage objects, and signed URLs accidentally embedded in front-end code. OWASP's client-side risk guidance explicitly calls out sensitive data stored client-side and proprietary information shipped to the browser as repeatable sources of exposure, not edge cases (OWASP Top 10 Client-Side Security Risks).
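That starting point, enumerating what a page asks the browser to load, can be sketched with Python's standard-library HTML parser. This is a minimal sketch under stated assumptions: it only sees URLs present in the static HTML (chunks that JavaScript loads later require a real or headless browser), and the tag-to-attribute mapping and the `AssetCollector` and `collect_assets` names are hypothetical, not a complete crawler.

```python
from html.parser import HTMLParser
from typing import Dict, List, Set
from urllib.parse import urljoin, urlparse


class AssetCollector(HTMLParser):
    """Collect URLs the page asks the browser to load, as they appear in static HTML."""

    # Tag -> attribute that carries a loadable URL (an illustrative subset, not exhaustive).
    URL_ATTRS = {"script": "src", "link": "href", "img": "src", "iframe": "src"}

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.assets: Set[str] = set()

    def handle_starttag(self, tag, attrs):
        wanted = self.URL_ATTRS.get(tag)
        for name, value in attrs:
            if name == wanted and value:
                # Resolve relative references so every asset is an absolute URL.
                self.assets.add(urljoin(self.base_url, value))


def collect_assets(base_url: str, html: str) -> Dict[str, List[str]]:
    """Split referenced URLs into first-party and third-party hosts."""
    parser = AssetCollector(base_url)
    parser.feed(html)
    parser.close()
    page_host = urlparse(base_url).hostname
    result: Dict[str, List[str]] = {"first_party": [], "third_party": []}
    for url in sorted(parser.assets):
        bucket = "first_party" if urlparse(url).hostname == page_host else "third_party"
        result[bucket].append(url)
    return result
```

Separating first-party from third-party hosts keeps each artifact tied to the asset that served it, which matters later when evidence is correlated and prioritized.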

See the sanitized bundle excerpt below for a concrete example of how public client-side code can reveal workflow clues, signed object references, and operational context.

What attackers actually look for

There are five categories that matter most in practice:

- Secrets and tokens: API keys, cloud tokens, signed URLs, and service credentials mistakenly shipped to the browser.

- Workflow clues: service paths, staging references, GraphQL endpoints, and hidden workflow URLs referenced in bundles.

- Debug and framework leakage: stack traces, filesystem paths, framework versions, and error handlers that expose internal structure.

- Public build artifacts: source maps, static config files, environment fragments, and downloadable front-end bundles that reveal how the app is wired.

- Cloud object exposure: storage URLs, signed links, object paths, and public artifacts that point to externally observable misconfiguration signals.
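A first-pass triage of extracted strings into these five categories might look like the following. The patterns are illustrative assumptions, not a detection ruleset: the `AKIA` prefix is the well-known format of AWS access key IDs, the storage hostnames are the public endpoints of Amazon S3, Azure Blob Storage, and Google Cloud Storage, and the rest are simple heuristics. The `categorize` helper is hypothetical.

```python
import re
from typing import List

# Illustrative heuristics only: each pattern maps a match to one of the five categories.
CATEGORY_PATTERNS = [
    # AWS access key IDs start with "AKIA"; the case-insensitive branch catches key/token assignments.
    ("secrets_and_tokens", re.compile(r"\bAKIA[0-9A-Z]{16}\b|(?i:\b(?:api[_-]?key|secret|token)\s*[:=])")),
    ("workflow_clues", re.compile(r"/graphql\b|\bstaging[.-]|/internal/|/admin/")),
    ("debug_and_framework", re.compile(r"Traceback \(most recent call last\)|/var/www/|/home/[\w.-]+/")),
    ("public_build_artifacts", re.compile(r"\.js\.map\b|sourceMappingURL=|/\.env\b")),
    ("cloud_object_exposure", re.compile(r"s3[\w.-]*\.amazonaws\.com|\.blob\.core\.windows\.net|storage\.googleapis\.com")),
]


def categorize(reference: str) -> List[str]:
    """Return every category whose pattern matches; one string can fall into several."""
    return [name for name, pattern in CATEGORY_PATTERNS if pattern.search(reference)]
```

A reference such as `https://staging-api.example.com/graphql?token=...` lands in two categories at once, which is typical: the same string can be both a workflow clue and a credential.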

Why most teams miss it

Traditional web scanning often stops too early. It checks the homepage, follows a few links, and looks for obvious server-side issues. It does not always inspect everything the browser loads, correlate exposed values to the asset that served them, or explain which leaks are validated findings versus risk signals. OWASP guidance has been consistent for years on this point: client-side code paths, loaded resources, and browser-accessible objects deserve their own testing discipline because they change what an attacker can learn or control from the outside (OWASP WSTG Client-Side Resource Testing).

That creates two opposite failures at once: teams miss real exposure chains, and they drown in low-context secret matches that are technically interesting but operationally useless.
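A raw entropy check is a typical source of those low-context matches. The sketch below flags quoted literals that look random; the 20-character minimum and the 4.0 bits-per-character threshold are assumptions, not standards, and the helper names are hypothetical. Note what it cannot tell you: whether the value is live, what it grants, or which asset depends on it. Its output is a signal to validate, not a finding.

```python
import math
import re
from collections import Counter
from typing import List, Tuple

# Quoted literals of 20+ token-like characters (an assumed heuristic, not a secret format).
LITERAL_RE = re.compile(r"""["']([A-Za-z0-9_\-+/=]{20,})["']""")


def shannon_entropy(value: str) -> float:
    """Bits per character of the string's own character distribution."""
    counts = Counter(value)
    total = len(value)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def high_entropy_literals(bundle_text: str, threshold: float = 4.0) -> List[Tuple[str, float]]:
    """Return (literal, entropy) pairs at or above an assumed threshold."""
    hits = []
    for match in LITERAL_RE.finditer(bundle_text):
        literal = match.group(1)
        score = shannon_entropy(literal)
        if score >= threshold:
            hits.append((literal, round(score, 2)))
    return hits
```

Build hashes, chunk IDs, and integrity digests also score high, which is exactly why unvalidated entropy matches pile up as noise.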

What the attacker sees that the defender often does not

A single public artifact can collapse the attacker's discovery time. A source map can reveal workflow structure. A leaked config file can expose service names. A ThinkPHP debug page can reveal framework version and filesystem paths. A signed storage URL can expose how long a token remains valid and whether access scope is too broad. Even government advisories still document information exposure through error messages as a real risk signal because technical leakage helps attackers plan more precise follow-on activity (CISA: Information Exposure Through an Error Message; CISA: Error Messages Containing Sensitive Information).
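To make the signed-URL point concrete: an AWS Signature Version 4 presigned URL carries its issue time in `X-Amz-Date` and its lifetime in seconds in `X-Amz-Expires`, so anyone holding the link can compute its validity window. The sketch assumes that format only; other providers (for example Azure SAS tokens or Google Cloud Storage signed URLs) use different parameters, and the hypothetical `signed_url_window` helper neither verifies the signature nor tests access.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional
from urllib.parse import parse_qs, urlparse

# AWS SigV4 presigned URLs can be issued for at most 7 days (604800 seconds).
SIGV4_MAX_EXPIRES = 604800


def signed_url_window(url: str, now: Optional[datetime] = None) -> dict:
    """Read issue time and lifetime from an AWS SigV4 presigned URL's query string."""
    params = {key: values[0] for key, values in parse_qs(urlparse(url).query).items()}
    issued = datetime.strptime(params["X-Amz-Date"], "%Y%m%dT%H%M%SZ").replace(tzinfo=timezone.utc)
    lifetime = int(params["X-Amz-Expires"])
    expires_at = issued + timedelta(seconds=lifetime)
    now = now or datetime.now(timezone.utc)
    return {
        "expires_at": expires_at.isoformat(),
        "lifetime_seconds": lifetime,
        "still_valid": now < expires_at,
        # A link issued at the maximum lifetime suggests nobody chose a shorter one deliberately.
        "maximum_lifetime": lifetime >= SIGV4_MAX_EXPIRES,
    }
```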

The point is not that every exposed artifact is immediately confirmed as a finding. The point is that public artifacts can shorten attacker discovery and create evidence-backed access opportunities that deserve review.
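The line between a confirmed finding and a signal can be made explicit in code. In this minimal sketch, the hypothetical `validate_reference` helper treats a reference as validated only when an unauthenticated request returns content. The fetch function is injected so the decision logic can be tested offline, and `urllib_fetch` is one possible standard-library implementation. A real program would also judge whether the returned content is security-relevant, which this sketch does not attempt.

```python
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

# fetch(url) -> (HTTP status, body bytes); injected so the decision can be tested offline.
Fetcher = Callable[[str], Tuple[int, bytes]]


@dataclass
class ValidationResult:
    url: str
    status: Optional[int]
    verdict: str  # "validated" (reachable, returned content) or "risk_signal" (needs review)


def urllib_fetch(url: str, timeout: float = 10.0) -> Tuple[int, bytes]:
    """Unauthenticated GET that returns the status and at most the first 4 KiB of the body."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status, resp.read(4096)
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses arrive as exceptions; keep the status, drop the body.
        return err.code, b""


def validate_reference(url: str, fetch: Fetcher = urllib_fetch) -> ValidationResult:
    """Mark a reference as validated only if an unauthenticated request returns content."""
    try:
        status, body = fetch(url)
    except OSError:
        # DNS failures, timeouts, refused connections: the reference remains a signal.
        return ValidationResult(url, None, "risk_signal")
    verdict = "validated" if status == 200 and body else "risk_signal"
    return ValidationResult(url, status, verdict)
```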

How Fusionstek approaches the problem

We treat public artifact leakage as an external assurance problem, not just a string-matching exercise. The workflow is simple but strict. It is also aligned with well-established defensive guidance: do not place secrets in client-side code, do not rely on the browser for security-sensitive logic, and validate exposure from the same external perspective an attacker uses (OWASP AJAX Security Cheat Sheet; OWASP DevSecOps Secrets Management).

- Discover: enumerate what the public app actually loads and references.

- Extract: identify secrets, workflow references, storage objects, debug traces, and config clues.

- Validate: determine whether the exposed value or path is live, reachable, and security-relevant.

- Correlate: tie the evidence back to the specific asset, technology, and attacker-relevant exposure path it affects.

- Redact: keep evidence actionable without irresponsibly displaying sensitive material.
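The Redact step can be as simple as keeping a short prefix and suffix plus a hash fingerprint, so the owning team can recognize and match the value without the report reproducing it. The 4-character window, the 12-character fingerprint, and the `redact` and `evidence_record` names are illustrative assumptions.

```python
import hashlib


def redact(value: str, keep: int = 4) -> str:
    """Keep `keep` characters at each end; mask everything in between."""
    if len(value) <= keep * 2:
        return "*" * len(value)  # too short to show any part safely
    return value[:keep] + "*" * (len(value) - keep * 2) + value[-keep:]


def evidence_record(asset: str, artifact_url: str, value: str) -> dict:
    """Evidence that identifies the exposed value without reproducing it."""
    return {
        "asset": asset,
        "artifact_url": artifact_url,
        "preview": redact(value),
        # A fingerprint lets the owning team match the value against their own inventory.
        "sha256_prefix": hashlib.sha256(value.encode("utf-8")).hexdigest()[:12],
    }
```

For short or guessable values, even a truncated unkeyed hash can be brute-forced; a keyed hash (for example Python's `hmac` module with a per-report key) is the safer fingerprint.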

Examples of exposure classes worth treating seriously

- JavaScript token exposure: front-end bundles containing high-entropy values tied to service or cloud access patterns.

- Source map disclosure: workflow names, modules, or environment-specific clues disclosed through map files.

- Framework debug leakage: stack traces, path disclosure, or framework identity from unhandled production errors.

- Public artifact URL exposure: downloadable files or build outputs that give away implementation details and sensitive references.

- Cloud link overexposure: signed links, public object URLs, or static-site artifacts that reveal larger cloud surface weaknesses.
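Source map disclosure is straightforward to check. Bundlers typically append a `//# sourceMappingURL=` comment to the generated file, and a Source Map v3 file lists original module paths in `sources` and may embed the original code in `sourcesContent`. The sketch below assumes that layout; the prefix stripping targets webpack-style `webpack://namespace/` paths, and the helper names are hypothetical.

```python
import json
import re
from typing import Optional
from urllib.parse import urljoin

# Matches the reference comment bundlers append ("//#" is current, "//@" is legacy).
SOURCEMAP_COMMENT = re.compile(r"//[#@]\s*sourceMappingURL=(\S+)")


def find_sourcemap_url(bundle_url: str, bundle_text: str) -> Optional[str]:
    """Resolve the last sourceMappingURL comment in a bundle against the bundle's own URL."""
    matches = SOURCEMAP_COMMENT.findall(bundle_text)
    return urljoin(bundle_url, matches[-1]) if matches else None


def summarize_sourcemap(map_text: str) -> dict:
    """Summarize what a v3 source map discloses about the original code layout."""
    data = json.loads(map_text)
    # Strip webpack-style "scheme://namespace/" prefixes and a leading "./".
    paths = [re.sub(r"^[a-z]+://[^/]*/", "", s).removeprefix("./") for s in data.get("sources", [])]
    contents = data.get("sourcesContent") or []
    return {
        "module_paths": sorted(paths),
        "embeds_original_source": any(bool(c) for c in contents),
    }
```

Module paths alone often name workflows and environments; an embedded `sourcesContent` hands over the original, unminified code.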

What this means to the client

The business issue is not “a scanner found a string.” The issue is that a public application revealed enough information to shorten attacker effort or expose sensitive access paths that should never have been available to unauthenticated users.

That is why evidence matters. Security teams need to know which asset served the artifact, what was exposed, whether it was verified, and what should be fixed first. Auditors and insurers often ask for evidence that the issue was identified, reviewed, and remediated.
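Those four questions map naturally onto a small evidence record. The sketch below groups findings by the asset that served them and ranks assets so validated findings come first. The `Finding` fields mirror the questions above, while the category weights and the +10 bonus for validation are hypothetical choices, not a standard scoring model.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Finding:
    asset: str         # which asset served the artifact
    artifact_url: str  # what was exposed, and where
    category: str      # e.g. "secrets_and_tokens", "public_build_artifacts"
    validated: bool    # whether it was verified from outside


# Hypothetical weights; a real program would tune these to its own risk model.
CATEGORY_WEIGHT = {
    "secrets_and_tokens": 3,
    "cloud_object_exposure": 3,
    "debug_and_framework": 2,
    "public_build_artifacts": 2,
    "workflow_clues": 1,
}


def score(finding: Finding) -> int:
    # The +10 bonus guarantees any validated finding outranks every unvalidated signal.
    return CATEGORY_WEIGHT.get(finding.category, 1) + (10 if finding.validated else 0)


def prioritize(findings: List[Finding]) -> List[Tuple[str, int, List[Finding]]]:
    """Group findings by asset, then rank assets by their highest-scoring finding."""
    by_asset: Dict[str, List[Finding]] = defaultdict(list)
    for finding in findings:
        by_asset[finding.asset].append(finding)
    ranked = []
    for asset, items in by_asset.items():
        items.sort(key=score, reverse=True)
        ranked.append((asset, score(items[0]), items))
    ranked.sort(key=lambda entry: entry[1], reverse=True)
    return ranked
```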

What teams should do next

- Remove secrets and operational tokens from client-side code and public artifacts.

- Disable production debug output and replace verbose framework error pages with generic ones.

- Restrict source map and build artifact exposure where they are not needed publicly.

- Rotate any exposed keys or signed URLs, then shorten their lifetime and narrow their scope.

- Retest externally so remediation is verified from the same attacker-visible surface.
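Retesting can reuse the same external request that originally triggered the leak and compare the response bodies before and after the fix against a set of leakage indicators. The indicator patterns below (including the ThinkPHP version banner) are illustrative assumptions, the helper names are hypothetical, and a clean result only means none of these specific patterns appeared.

```python
import re
from typing import List

# Illustrative indicators of debug or framework leakage in an error response body.
LEAK_INDICATORS = {
    "python_traceback": re.compile(r"Traceback \(most recent call last\)"),
    "java_stack_frame": re.compile(r"\n\s+at [\w$.]+\([\w$]+\.java:\d+\)"),
    "unix_path": re.compile(r"(?:/var/www|/home/[\w.-]+|/srv|/opt)/[\w./-]+"),
    "windows_path": re.compile(r"[A-Z]:\\(?:[\w.-]+\\)+"),
    "thinkphp_banner": re.compile(r"ThinkPHP\s*V?\d+(?:\.\d+)*", re.IGNORECASE),
}


def leak_indicators(body: str) -> List[str]:
    """Names of every indicator found in a response body, sorted for stable reports."""
    return sorted(name for name, pattern in LEAK_INDICATORS.items() if pattern.search(body))


def remediation_verified(before: str, after: str) -> bool:
    """True if the leak was visible before the fix and no indicator remains after it."""
    return bool(leak_indicators(before)) and not leak_indicators(after)
```

Requiring the indicator to be present in the "before" body guards against a false pass where the retest simply failed to reproduce the original error.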

Why this belongs in an assurance program

Public artifact leaks are not just a developer hygiene issue. They are a repeatable source of external exposure, a real part of attacker reconnaissance, and a strong example of why external assurance has to be evidence-backed and refreshed at a cadence that depends on plan and scope. If you only look at what the app is supposed to expose, you will miss what the app is actually serving.

For related industry reading on the narrower problem of secrets in JavaScript, see Intruder's research on secrets detection in JavaScript. Fusionstek's framing is broader: public artifact leakage should be treated as an attack-surface and assurance problem, not just a pattern-matching problem.